Predicting human face attributes from images with deep learning

30 May 2016 | image recognition transfer learning MatConvnet

Deep learning has proven powerful at solving many problems, but it requires plenty of data and computational resources, which unfortunately few possess (the likes of Google, Facebook and Amazon). The good news is that those without these resources can still benefit from deep learning through transfer learning. Transfer learning allows one to take a model trained on a task X and fine-tune it for a related task Y, using a small dataset for task Y. One still needs access to a suitable pretrained model, however.
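To make the idea concrete, here is a minimal, framework-free sketch in Python under toy assumptions. A frozen "pretrained" feature extractor (the hypothetical function `pretrained_features`, standing in for layers of a network trained on task X) is reused unchanged, and only a new logistic-regression output layer is trained on a small labeled dataset for task Y. Full fine-tuning would additionally update the pretrained weights, usually with a small learning rate; that step is omitted here.

```python
import math

def pretrained_features(x):
    """Hypothetical frozen feature extractor standing in for layers trained on task X.

    Its 'weights' are hard-coded and are never updated during training below.
    """
    a, b = x
    return [a, b, a * b, 1.0]  # the constant 1.0 acts as a bias input

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def head_score(w, x):
    """Probability output by the new task-Y layer applied to the frozen features."""
    f = pretrained_features(x)
    return sigmoid(sum(wi * fi for wi, fi in zip(w, f)))

def train_head(data, epochs=200, lr=0.5):
    """Full-batch gradient descent on binary cross-entropy, updating only the new head."""
    w = [0.0] * len(pretrained_features(data[0][0]))
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for x, y in data:
            err = head_score(w, x) - y  # d(loss)/d(logit) for sigmoid + cross-entropy
            for i, fi in enumerate(pretrained_features(x)):
                grad[i] += err * fi / len(data)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w

def predict(w, x):
    return 1 if head_score(w, x) >= 0.5 else 0

# Small labeled dataset for a toy task Y: label is 1 when both inputs share a sign.
grid = [-1.0, -0.5, 0.5, 1.0]
data = [((a, b), 1 if a * b > 0 else 0) for a in grid for b in grid]
weights = train_head(data)
accuracy = sum(predict(weights, x) == y for x, y in data) / len(data)
```

The toy extractor happens to expose a feature (`a * b`) that makes task Y linearly separable, which is what transfer learning relies on: features learned for X should already be useful for Y. With a real pretrained CNN, the features would instead be high-dimensional activations from a late layer.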

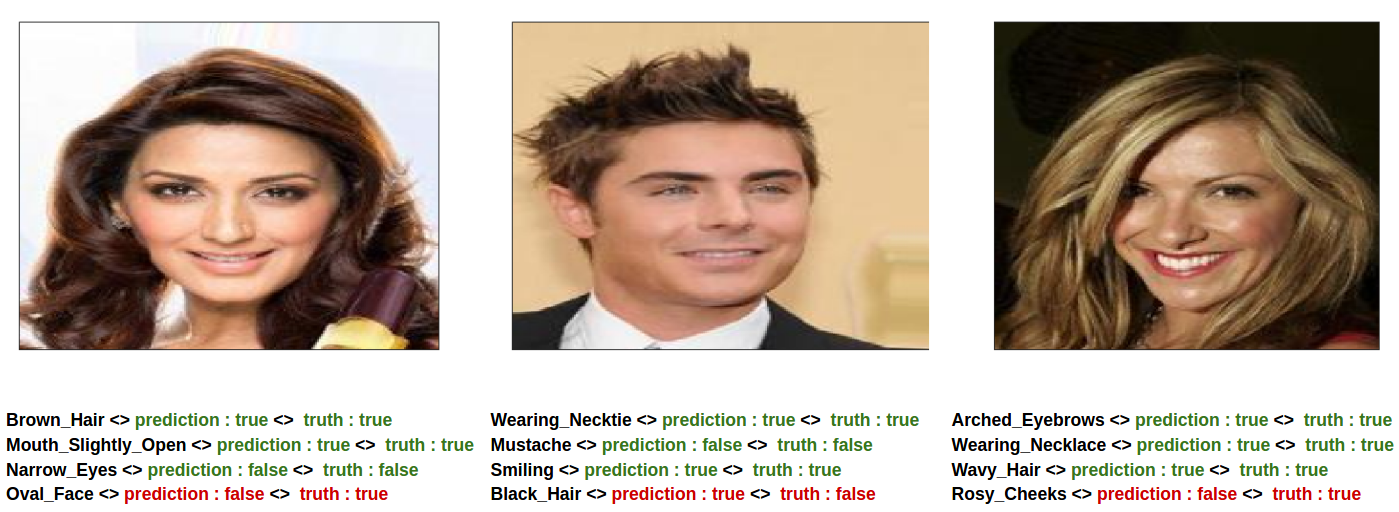

For the final project of an image recognition course at KTH, I used deep Convolutional Neural Networks (CNNs) to predict human attributes such as skin tone, hair color and age from a face image. I fine-tuned a CNN pretrained for general object classification (a VGG network trained for the ImageNet challenge) on CelebA, a dataset of face images annotated with 40 facial attributes per image, so that it predicts those attributes from a face image. As a baseline, I trained a linear classifier for attribute prediction on representations extracted from the pretrained CNN. When trained on 20% of the CelebA dataset (~40K images), the fine-tuned CNN achieved an average accuracy of ~89.9% across the 40 attributes. All experiments used the MatConvNet deep learning framework.
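Since every CelebA image carries 40 binary attribute labels, attribute prediction is a multi-label problem: the model produces one score per attribute, each thresholded independently, and a single summary figure is obtained by averaging accuracy over attributes. The Python sketch below shows one way such an average accuracy could be computed, assuming per-attribute scores in [0, 1] and a 0.5 threshold. The function name and the toy data are hypothetical, and the exact evaluation protocol used in the project may differ.

```python
from typing import List

def mean_attribute_accuracy(
    scores: List[List[float]], labels: List[List[int]], threshold: float = 0.5
) -> float:
    """Average over attributes of the per-attribute accuracy on a set of images.

    scores[i][k]: predicted probability that image i has attribute k.
    labels[i][k]: ground-truth label for attribute k of image i, in {0, 1}.
    """
    n_images = len(labels)
    n_attrs = len(labels[0])
    per_attr = []
    for k in range(n_attrs):
        # An image counts as correct for attribute k if the thresholded score matches the label.
        correct = sum(
            (scores[i][k] >= threshold) == (labels[i][k] == 1) for i in range(n_images)
        )
        per_attr.append(correct / n_images)
    return sum(per_attr) / n_attrs

# Toy example: 3 images, 2 attributes (a real evaluation would use 40 attributes).
scores = [[0.9, 0.2], [0.4, 0.7], [0.6, 0.1]]
labels = [[1, 0], [0, 1], [0, 0]]
avg = mean_attribute_accuracy(scores, labels)  # (2/3 + 3/3) / 2 = 5/6
```

For scale, CelebA contains 202,599 images, so a 20% subset is roughly 40,500 images, in line with the ~40K quoted above.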

See this document for details on the deep network, the fine-tuning procedure, the experiments conducted and a discussion of the results.
